OpenClaw Architecture: 8-Tier Routing & Sandbox Deep Dive

How OpenClaw routes messages across Discord, Telegram, and Slack with an 8-tier priority cascade, then isolates agent execution in pluggable Docker/SSH sandboxes.


TL;DR: OpenClaw is a 365K-star open-source personal AI assistant that runs on your own hardware and connects to the messaging platforms you already use — Discord, Telegram, Slack, WhatsApp, and more. Under the hood, its 8-tier message routing system decides which agent handles each incoming message using a priority cascade from exact user match down to platform-wide fallback. Once routed, agent code executes inside pluggable sandboxes (Docker or SSH) with 4-layer security that blocks path traversal, credential leaks, and privilege escalation. This article walks through both systems with real code and config examples from the OpenClaw repository.

Key Takeaways

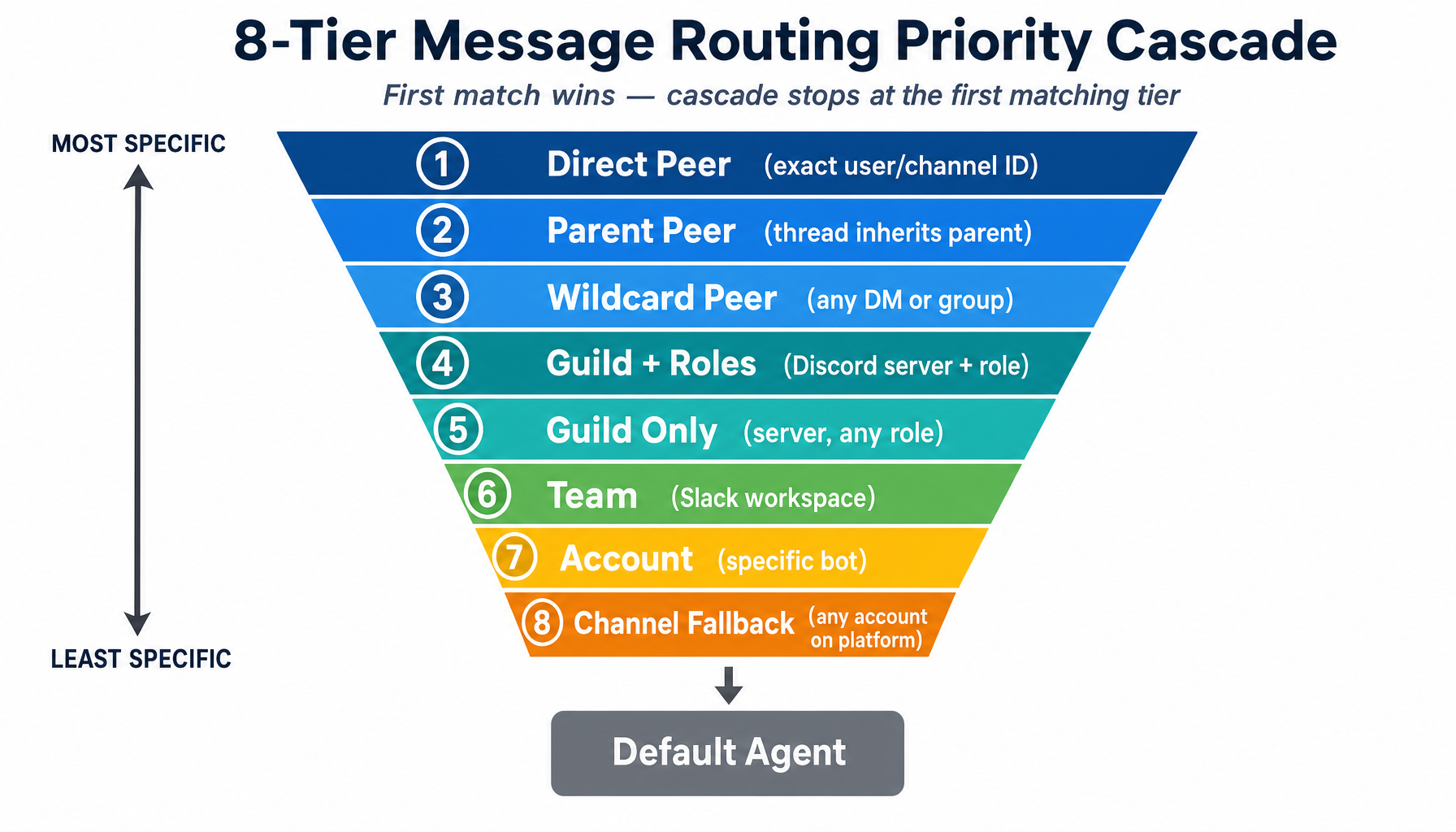

- OpenClaw's 8-tier routing cascade resolves which agent handles a message by checking the most specific match (exact user ID) first and falling through to broader matches (platform-wide default), with source order as the tiebreaker within a tier.

- Thread inheritance (Tier 2) automatically routes Discord/Slack threads to the same agent as their parent channel — without it, every thread would fall to the default agent.

- A 3-layer caching system (agent lookup → evaluated bindings → resolved routes) keeps routing fast. The caches are WeakMap-keyed on the config object, so they are garbage collected automatically when the config is replaced.

- The sandbox system uses a pluggable backend architecture: Docker and SSH ship built-in, but any backend can register via `registerSandboxBackend()` and implement the same `SandboxBackendHandle` interface.

- Container security enforces 4 layers simultaneously: environment variable sanitization, bind mount validation, container hardening (read-only root, cap-drop ALL), and filesystem bridge guards with path traversal rejection.

- Config drift detection compares SHA-256 hashes of sandbox config against running containers, warning before recreating hot containers and silently recycling idle ones.

Why OpenClaw's Routing Matters

Imagine you run OpenClaw as your personal AI hub. You have a Telegram bot for personal chats, a Discord bot across 3 servers, a Slack bot at work, and a WhatsApp link for family. Each conversation might need a different agent — your "work" agent with code tools, your "home" agent for casual chat, a "gaming" agent with a different personality.

When a message arrives, OpenClaw must instantly decide: which agent handles this? The answer is an 8-tier priority cascade.

The 8-Tier Routing Cascade

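The routing system works like an `if / else if / ... / else` chain where the first match wins. The tiers run from most specific (an exact person) to least specific (an entire platform). Tiers 1 through 5, which the examples in this article exercise, are exact peer, parent peer, wildcard peer, guild plus role, and guild.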

The core routing code iterates through a single array of tier objects, each with a predicate:
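The following is a minimal sketch of such a cascade, not OpenClaw's actual code. The `Message`, `Scope`, and `Binding` shapes and the `resolveAgent` function are hypothetical names introduced for illustration, and the order of tiers 6 to 8 (team, account, platform) is an assumption inferred from the index names mentioned in the caching section.

```typescript
// Hypothetical shapes for illustration; OpenClaw's real types are not shown in this article.
type PeerKind = "direct" | "group" | "channel";

interface Message {
  platform: string;                      // e.g. "discord"
  accountId: string;                     // which bot account received the message
  peer: { kind: PeerKind; id: string };  // the chat the message arrived in
  parentPeerId?: string;                 // parent channel when the message is in a thread
  guildId?: string;
  teamId?: string;
  roles: string[];                       // sender's role IDs
}

type Scope =
  | { type: "peer"; kind: PeerKind; id: string } // id "*" is a wildcard
  | { type: "guild"; guildId: string; roles?: string[] }
  | { type: "team"; teamId: string }
  | { type: "account"; accountId: string }
  | { type: "platform" };

interface Binding { agentId: string; platform: string; scope: Scope }

// One predicate per tier, ordered from most to least specific.
const tiers: Array<(s: Scope, m: Message) => boolean> = [
  /* 1 exact peer    */ (s, m) => s.type === "peer" && s.id !== "*" && s.kind === m.peer.kind && s.id === m.peer.id,
  /* 2 parent peer   */ (s, m) => s.type === "peer" && s.id !== "*" && s.id === m.parentPeerId,
  /* 3 wildcard peer */ (s, m) => s.type === "peer" && s.id === "*" && s.kind === m.peer.kind,
  /* 4 guild + roles */ (s, m) => s.type === "guild" && s.guildId === m.guildId && s.roles !== undefined
                                  && s.roles.some((r) => m.roles.indexOf(r) !== -1),
  /* 5 guild         */ (s, m) => s.type === "guild" && s.guildId === m.guildId && s.roles === undefined,
  /* 6 team          */ (s, m) => s.type === "team" && s.teamId === m.teamId,
  /* 7 account       */ (s, m) => s.type === "account" && s.accountId === m.accountId,
  /* 8 platform-wide */ (s) => s.type === "platform",
];

function resolveAgent(bindings: Binding[], m: Message, defaultAgent: string): { agentId: string; tier: number } {
  const candidates = bindings.filter((b) => b.platform === m.platform);
  let tier = 0;
  for (const matches of tiers) {
    tier += 1;
    // Inner loop walks bindings in source order, so the first listed binding wins a tie.
    for (const b of candidates) {
      if (matches(b.scope, m)) return { agentId: b.agentId, tier };
    }
  }
  return { agentId: defaultAgent, tier: 0 }; // tier 0 means "no tier matched"
}

// A thread under "code-review" inherits that channel's agent via tier 2,
// even though the guild binding is listed first in the config.
const exampleBindings: Binding[] = [
  { agentId: "sonnet-chat", platform: "discord", scope: { type: "guild", guildId: "g1" } },
  { agentId: "opus-coder", platform: "discord", scope: { type: "peer", kind: "channel", id: "code-review" } },
];
const threadMessage: Message = {
  platform: "discord", accountId: "bot", peer: { kind: "channel", id: "thread-42" },
  parentPeerId: "code-review", guildId: "g1", roles: [],
};
const routed = resolveAgent(exampleBindings, threadMessage, "haiku-mod");
```

Because the outer loop is over tiers and the inner loop is over bindings, specificity always beats position in the config; position only matters between bindings that match at the same tier.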

Within a tier, source order matters — if two bindings both match at the same tier, the one listed first in config wins.

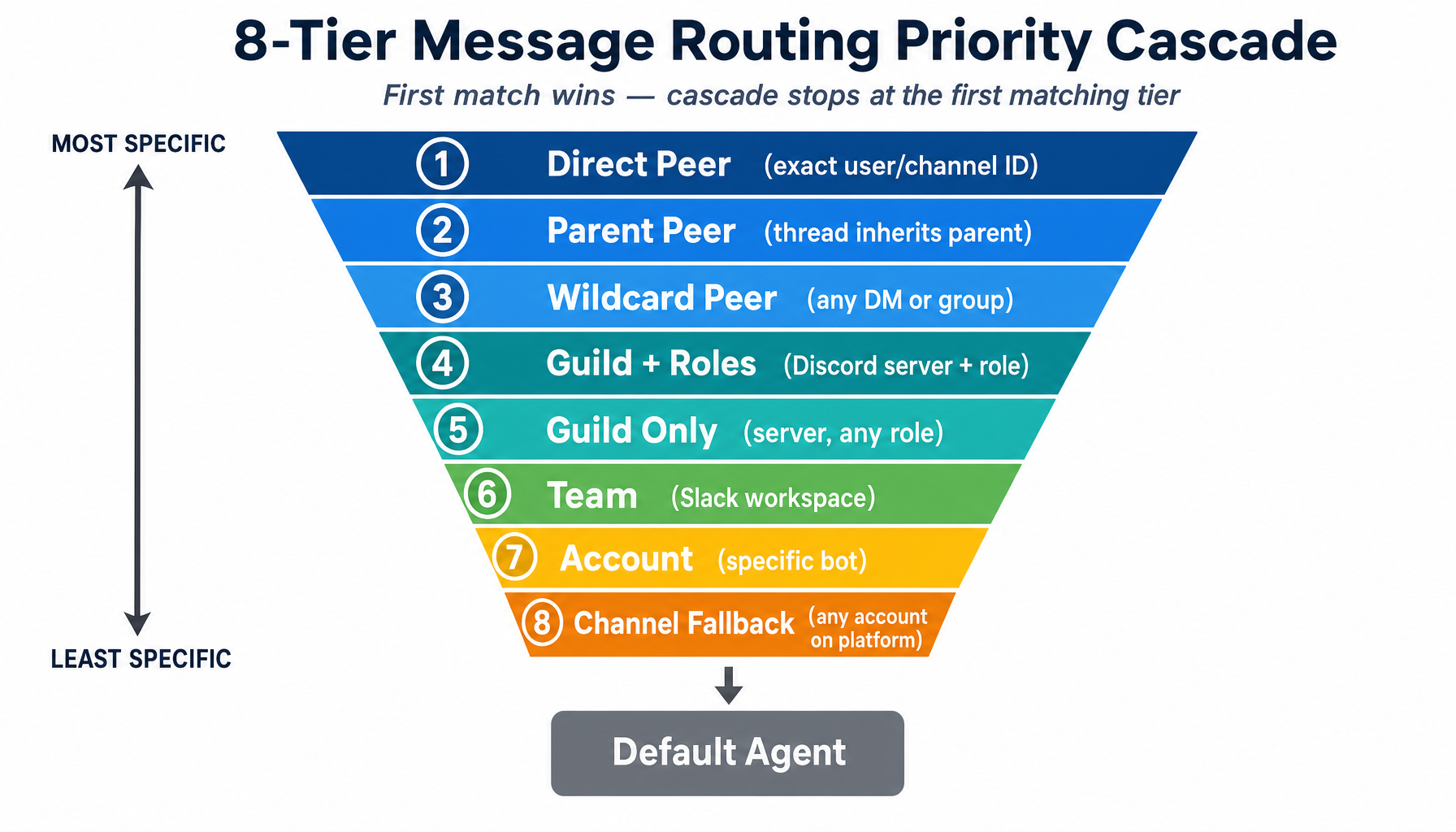

Real-World Example: A Discord Server

Suppose you configure OpenClaw for a Discord community with three agents:
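The original configuration is not reproduced here, so the fragment below is a hypothetical reconstruction that is consistent with the outcomes listed next. The field names (`defaultAgent`, `bindings`, `scope`) and the placeholder IDs are assumptions for illustration, not OpenClaw's actual schema.

```typescript
// Hypothetical config shape; replace the placeholder IDs with real Discord IDs.
const discordConfig = {
  defaultAgent: "haiku-mod", // used when no binding matches, e.g. a DM with the bot
  bindings: [
    // Exact channel binding: everything in #code-review goes to the coding agent.
    { agentId: "opus-coder", platform: "discord", scope: { type: "peer", kind: "channel", id: "CODE_REVIEW_CHANNEL_ID" } },
    // Guild + role binding: premium members get the coding agent anywhere in the server.
    { agentId: "opus-coder", platform: "discord", scope: { type: "guild", guildId: "GUILD_ID", roles: ["PREMIUM_ROLE_ID"] } },
    // Guild-wide binding: everyone else in the server gets the chat agent.
    { agentId: "sonnet-chat", platform: "discord", scope: { type: "guild", guildId: "GUILD_ID" } },
  ],
};
```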

Here's what happens for different messages:

- Message from #code-review: Tier 1 matches peer ID → `opus-coder`

- @PremiumUser in #general: Tiers 1-3 miss, Tier 4 matches guild + role → `opus-coder`

- @FreeUser in #general: Tiers 1-4 miss, Tier 5 matches guild → `sonnet-chat`

- DM with the bot: Tiers 1-8 all miss → default `haiku-mod`

Thread Inheritance and Wildcard Peers

Thread Inheritance (Tier 2) is subtle but essential. Discord and Slack threads spawn from parent channels. Without Tier 2, every thread would fall to the default agent. With it, threads automatically inherit their parent channel's agent binding.

Wildcard Peers (Tier 3) use `id: "*"` to match any peer of a given kind, but they respect kind boundaries:
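A small sketch of that rule, assuming a peer is described by a `kind` and an `id`. The `Peer` type and `peerMatches` function are hypothetical names, not OpenClaw's API.

```typescript
type PeerKind = "direct" | "group" | "channel";
interface Peer { kind: PeerKind; id: string }

// "*" matches any id, but only within the same kind.
function peerMatches(pattern: Peer, actual: Peer): boolean {
  if (pattern.kind !== actual.kind) return false;
  return pattern.id === "*" || pattern.id === actual.id;
}

// Hypothetical binding: every direct message goes to the "personal" agent.
const personalBinding: { agentId: string; peer: Peer } = {
  agentId: "personal",
  peer: { kind: "direct", id: "*" },
};

const dmFromAlice = peerMatches(personalBinding.peer, { kind: "direct", id: "alice" });
const bookClubGroup = peerMatches(personalBinding.peer, { kind: "group", id: "book-club" });
```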

A DM from @alice (`kind=direct`) matches `direct:*` and routes to `personal`. A group chat "Book Club" (`kind=group`) does not match, which prevents group chats from being routed to a private agent by accident.

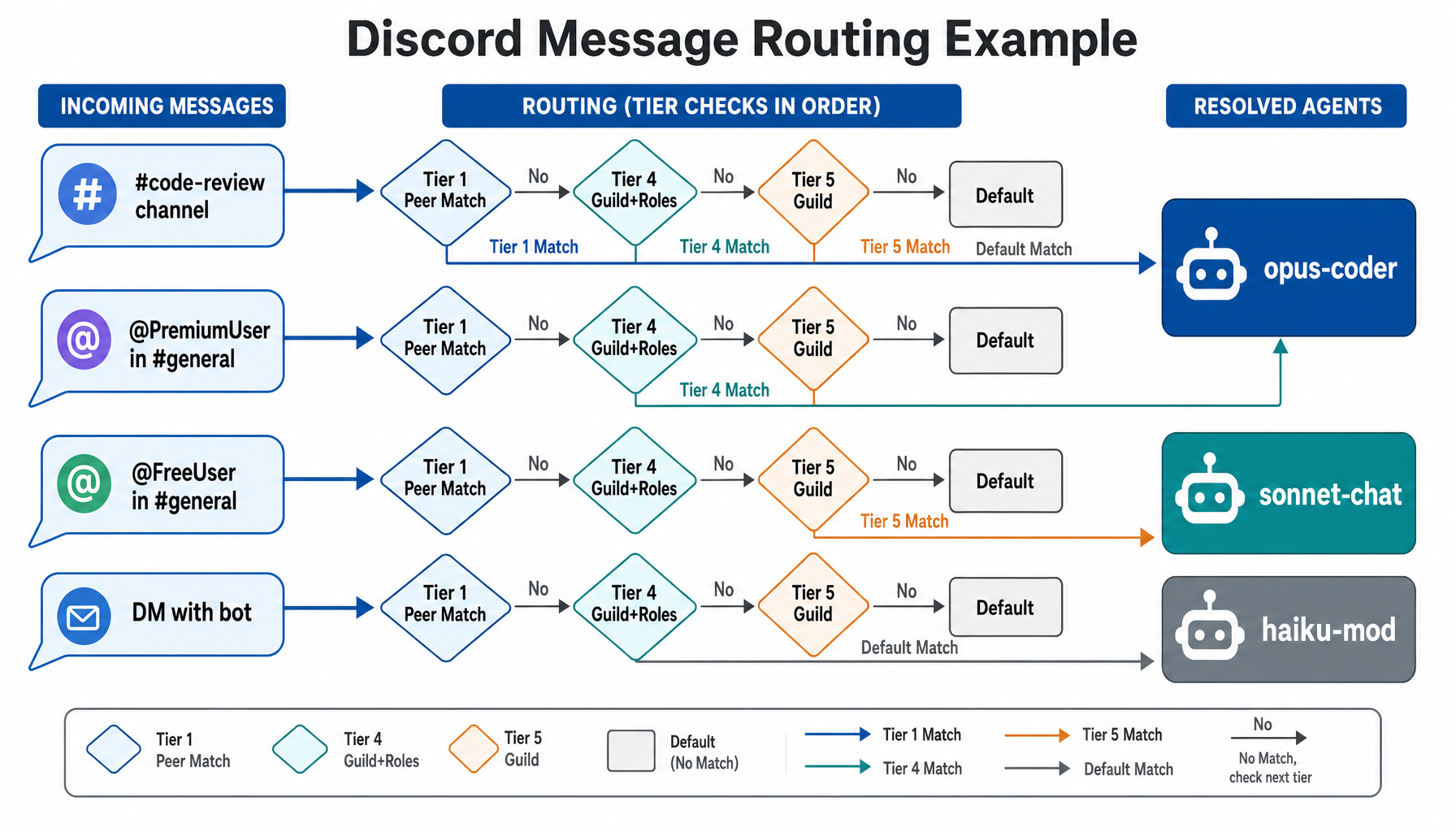

The 3-Layer Caching System

Every single message hits the routing logic, so it must be fast. OpenClaw uses three cache layers:

- Layer 1 — Agent Lookup Cache: Maps normalized agent ID to agent config. Invalidated on agents config change.

- Layer 2 — Evaluated Bindings Cache (max 2,000 entries): Pre-indexes bindings into maps (`byPeer`, `byGuildWithRoles`, `byTeam`, `byAccount`, `byChannel`). Invalidated on bindings config change.

- Layer 3 — Resolved Route Cache (max 4,000 entries): Caches the full resolution result. Key is all routing parameters joined by tab characters. Invalidated on any config change.

All caches are WeakMap-keyed on the config object — when config is replaced (e.g., hot-reload), old caches are garbage collected automatically. When a cache exceeds its size limit, it clears entirely and re-seeds with the current entry. Simple and effective — no LRU bookkeeping needed since config changes are infrequent.
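The resolved-route layer can be sketched as follows. This is an illustration under assumptions: `RoutingConfig`, `resolveWithCache`, and the `maxEntries` parameter are hypothetical names, and only the tab-joined key, the WeakMap keyed on the config object, and the clear-and-re-seed behavior come from the description above.

```typescript
interface RoutingConfig { bindings: readonly unknown[] } // placeholder for the real config object

// One cache per config object. When a config object is dropped (e.g. after a
// hot-reload), its cache becomes unreachable and can be garbage collected.
const routeCaches = new WeakMap<RoutingConfig, Map<string, string>>();

function resolveWithCache(
  config: RoutingConfig,
  routeParams: string[],
  resolve: () => string, // the expensive tier-by-tier resolution
  maxEntries = 4000,
): string {
  let cache = routeCaches.get(config);
  if (cache === undefined) {
    cache = new Map<string, string>();
    routeCaches.set(config, cache);
  }
  const key = routeParams.join("\t"); // all routing parameters joined by tabs
  const cached = cache.get(key);
  if (cached !== undefined) return cached;

  const agentId = resolve();
  if (cache.size >= maxEntries) cache.clear(); // full: drop everything...
  cache.set(key, agentId);                     // ...and re-seed with the current entry
  return agentId;
}
```

Clearing the whole map is cruder than LRU eviction, but it keeps the hot path to a single `Map` lookup with no per-entry bookkeeping.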

Pluggable Sandbox Isolation

When an AI agent can execute shell commands, read files, and browse the web, isolation is critical. You don't want a Discord user's prompt to delete files from your main session's workspace. OpenClaw's sandbox system wraps untrusted agent sessions in isolated environments.

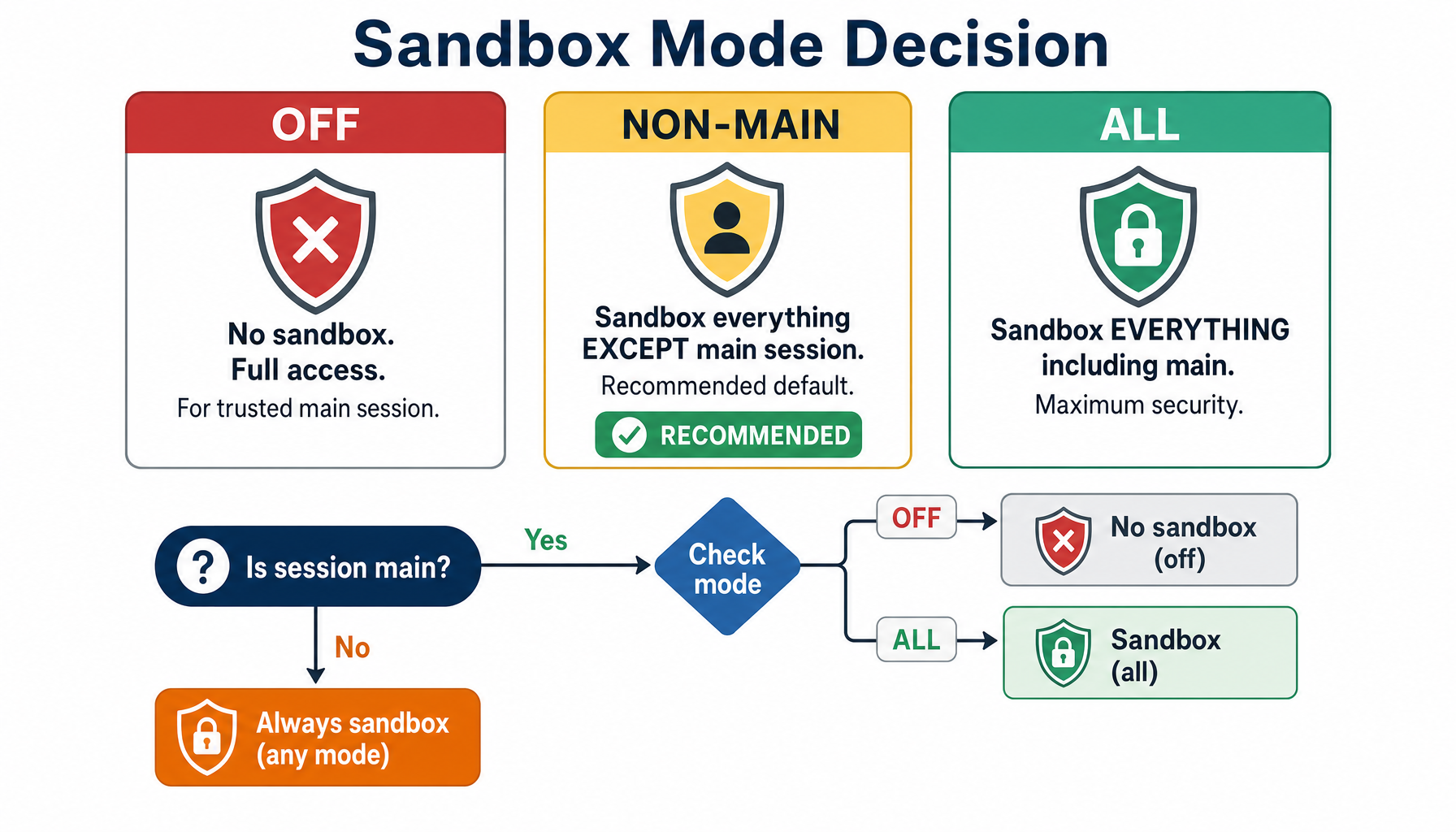

Three Sandbox Modes

- `off`: No sandbox. Full host access. For your trusted main session only.

- `non-main` (recommended): Sandbox everything except the main session. The sweet spot between security and convenience.

- `all`: Sandbox everything, including the main session. Maximum isolation.
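The three modes reduce to a small decision function. The sketch below uses a hypothetical `shouldSandbox` helper; only the mode names and their meanings come from the list above.

```typescript
type SandboxMode = "off" | "non-main" | "all";

// Decide whether a session runs inside a sandbox for a given mode.
function shouldSandbox(mode: SandboxMode, isMainSession: boolean): boolean {
  switch (mode) {
    case "off":
      return false;          // full host access for every session
    case "non-main":
      return !isMainSession; // only the trusted main session runs on the host
    case "all":
      return true;           // every session is isolated, including main
  }
}
```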

The Backend Plug-In Architecture

OpenClaw doesn't hardcode Docker. It uses a registry pattern where any backend can register itself:
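A registry of this kind can be as small as a map from backend name to factory. The sketch below is an assumption about the general shape: the article names `registerSandboxBackend()` but does not show its signature, so the parameters, the `BackendFactory` type, and `createSandboxBackend` are hypothetical.

```typescript
// Placeholder handle: the real SandboxBackendHandle has more members than this.
interface SandboxBackendHandle { readonly backend: string }

type BackendFactory = (options: Record<string, unknown>) => SandboxBackendHandle;

const backendRegistry = new Map<string, BackendFactory>();

// Assumed signature: a name plus a factory that builds a handle from options.
function registerSandboxBackend(name: string, factory: BackendFactory): void {
  if (backendRegistry.has(name)) throw new Error(`sandbox backend already registered: ${name}`);
  backendRegistry.set(name, factory);
}

function createSandboxBackend(name: string, options: Record<string, unknown> = {}): SandboxBackendHandle {
  const factory = backendRegistry.get(name);
  if (factory === undefined) throw new Error(`unknown sandbox backend: ${name}`);
  return factory(options);
}

// Built-in backends register the same way a third-party backend would.
registerSandboxBackend("docker", () => ({ backend: "docker" }));
registerSandboxBackend("ssh", () => ({ backend: "ssh" }));
```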

Every backend implements the same SandboxBackendHandle interface:
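The interface's real members are not reproduced in this article, so the following is a hypothetical sketch of what such a handle might expose: command execution, file access, and teardown. The method names, `ExecResult`, and the toy `InMemoryBackend` are all assumptions for illustration.

```typescript
interface ExecResult { exitCode: number; stdout: string; stderr: string }

// Assumed member set; the real SandboxBackendHandle may differ.
interface SandboxBackendHandle {
  exec(argv: string[]): Promise<ExecResult>;
  readFile(path: string): Promise<string>;
  writeFile(path: string, contents: string): Promise<void>;
  destroy(): Promise<void>;
}

// Toy backend that keeps "files" in memory; handy for tests, useless for isolation.
class InMemoryBackend implements SandboxBackendHandle {
  readonly files = new Map<string, string>();
  destroyed = false;

  async exec(argv: string[]): Promise<ExecResult> {
    if (argv[0] === "ls") {
      return { exitCode: 0, stdout: Array.from(this.files.keys()).join("\n"), stderr: "" };
    }
    return { exitCode: 127, stdout: "", stderr: `${argv[0] ?? ""}: command not found` };
  }

  async readFile(path: string): Promise<string> {
    const contents = this.files.get(path);
    if (contents === undefined) throw new Error(`no such file: ${path}`);
    return contents;
  }

  async writeFile(path: string, contents: string): Promise<void> {
    this.files.set(path, contents);
  }

  async destroy(): Promise<void> {
    this.files.clear();
    this.destroyed = true;
  }
}

// Callers depend only on the interface, so Docker, SSH, or a custom backend can be swapped in.
async function runTool(backend: SandboxBackendHandle, argv: string[]): Promise<string> {
  const result = await backend.exec(argv);
  if (result.exitCode !== 0) throw new Error(result.stderr);
  return result.stdout;
}
```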

Docker Backend in Practice

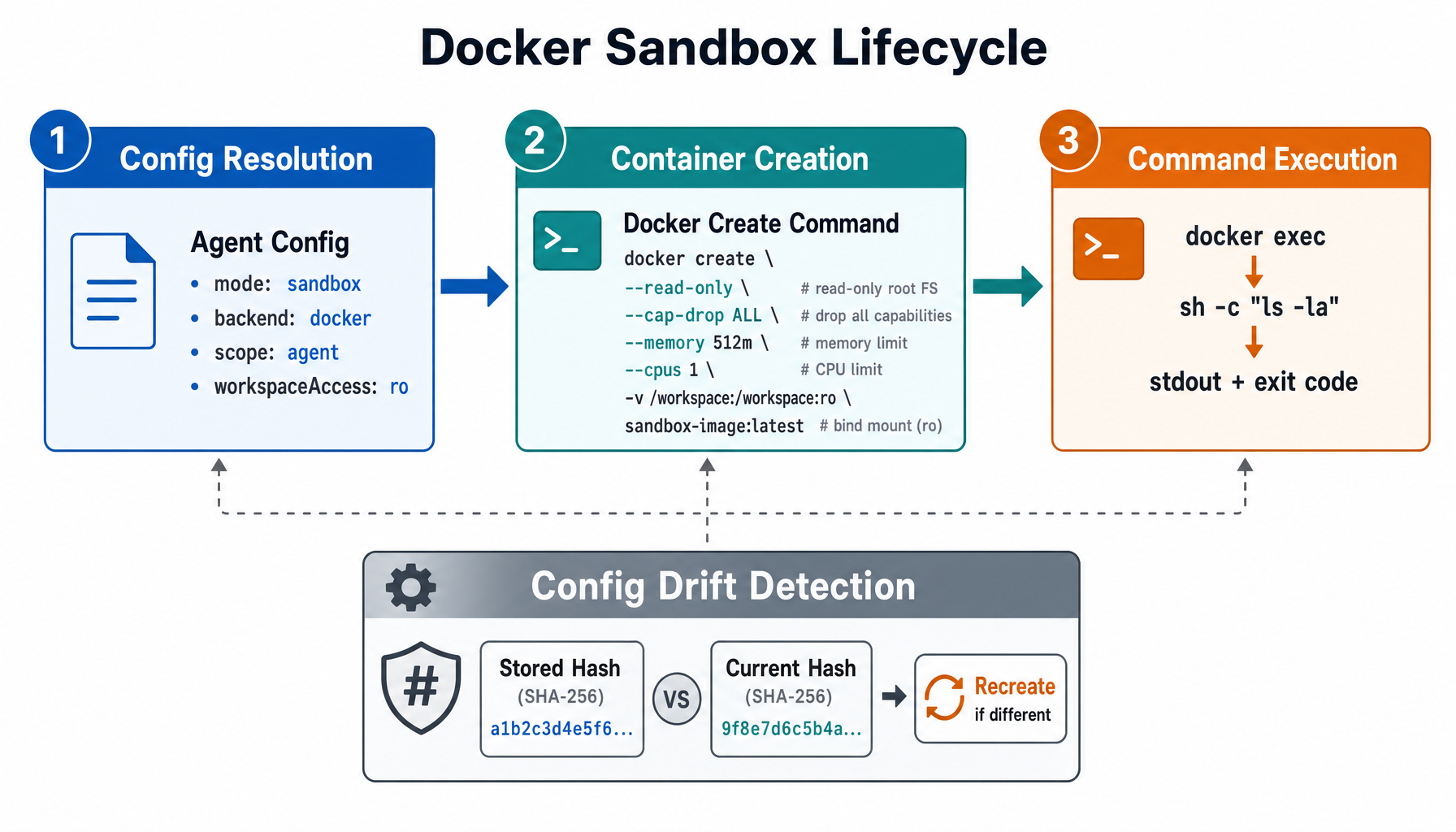

Here's the lifecycle when a Discord agent runs `ls -la`:

- Config resolution reads the agent's sandbox settings (`backend=docker`, `scope=agent`, `workspaceAccess=ro`).

- Container creation runs `docker create` with hardened flags: a `--read-only` root filesystem, `--security-opt=no-new-privileges`, `--cap-drop=ALL`, memory/CPU/PID limits, and a read-only bind mount for the workspace.

- Command execution runs `docker exec` to run the command inside the sandboxed container.
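As a rough illustration of the container-creation step, the sketch below assembles the argument list for `docker create`. The flags are standard Docker CLI options; the `DockerSandboxOptions` shape, the `buildDockerCreateArgs` name, the `/workspace` mount target, and the `sleep infinity` keep-alive command are assumptions rather than OpenClaw's actual invocation.

```typescript
interface DockerSandboxOptions {
  name: string;
  image: string;
  workspaceHostPath: string;
  workspaceAccess: "ro" | "rw";
  memory: string;    // e.g. "512m"
  cpus: number;      // e.g. 1.5
  pidsLimit: number; // e.g. 256
}

// Build the argv for `docker create` with the hardening flags described above.
function buildDockerCreateArgs(o: DockerSandboxOptions): string[] {
  return [
    "create",
    "--name", o.name,
    "--read-only",                      // read-only root filesystem
    "--security-opt=no-new-privileges", // block privilege escalation via setuid binaries
    "--cap-drop=ALL",                   // drop every Linux capability
    "--memory", o.memory,
    "--cpus", String(o.cpus),
    "--pids-limit", String(o.pidsLimit),
    "--volume", `${o.workspaceHostPath}:/workspace:${o.workspaceAccess}`,
    o.image,
    "sleep", "infinity",                // assumed keep-alive so `docker exec` has a target
  ];
}

const createArgs = buildDockerCreateArgs({
  name: "sandbox-agent-demo",
  image: "debian:stable-slim",
  workspaceHostPath: "/home/user/project",
  workspaceAccess: "ro",
  memory: "512m",
  cpus: 1,
  pidsLimit: 256,
});
```

Passing the result as an argument array to a process spawner, rather than joining it into a shell string, avoids shell-quoting problems with paths that contain spaces.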

Containers persist based on scope — "session" creates one per conversation, "agent" creates one per agent identity across sessions, and "shared" uses a single global container. Pruning runs every 5 minutes, removing containers idle over 24 hours or older than 7 days.
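The scope and pruning rules can be sketched like this. The thresholds (24 hours idle, 7 days old) come from the paragraph above; the `ContainerRecord` shape, the key format, and the function names are hypothetical.

```typescript
type SandboxScope = "session" | "agent" | "shared";

// Which container a session maps to, depending on scope.
function containerKey(scope: SandboxScope, agentId: string, sessionId: string): string {
  switch (scope) {
    case "session":
      return `session:${agentId}:${sessionId}`; // one container per conversation
    case "agent":
      return `agent:${agentId}`;                // one per agent identity, across sessions
    case "shared":
      return "shared";                          // a single global container
  }
}

interface ContainerRecord { createdAtMs: number; lastUsedAtMs: number }

const MAX_IDLE_MS = 24 * 60 * 60 * 1000;    // idle for more than 24 hours
const MAX_AGE_MS = 7 * 24 * 60 * 60 * 1000; // older than 7 days

// Called by a periodic sweep (every 5 minutes, per the description above).
function shouldPrune(c: ContainerRecord, nowMs: number): boolean {
  return nowMs - c.lastUsedAtMs > MAX_IDLE_MS || nowMs - c.createdAtMs > MAX_AGE_MS;
}
```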

Config Drift Detection

What happens when you change your sandbox config but a container is already running? OpenClaw computes a SHA-256 hash of the config and compares it to the stored hash. If they differ, it warns before recreating hot (recently used) containers and silently recreates idle ones.
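A minimal sketch of drift detection, assuming the config is plain JSON-like data. The key-sorting `canonicalize` step, the `hotWindowMs` parameter, and the function names are assumptions; the article only states that a SHA-256 hash of the config is compared against the stored one.

```typescript
import { createHash } from "node:crypto";

type Json = string | number | boolean | null | Json[] | { [key: string]: Json };

// Serialize with sorted keys so that key order never changes the hash.
function canonicalize(value: Json): string {
  if (value === null || typeof value !== "object") return JSON.stringify(value);
  if (Array.isArray(value)) return "[" + value.map(canonicalize).join(",") + "]";
  const obj: { [key: string]: Json } = value;
  const entries = Object.keys(obj)
    .sort()
    .map((key) => JSON.stringify(key) + ":" + canonicalize(obj[key] as Json));
  return "{" + entries.join(",") + "}";
}

function configHash(config: Json): string {
  return createHash("sha256").update(canonicalize(config)).digest("hex");
}

type DriftAction = "keep" | "warn" | "recreate";

// hotWindowMs is a hypothetical threshold for "recently used".
function driftAction(
  storedHash: string,
  currentConfig: Json,
  lastUsedAtMs: number,
  nowMs: number,
  hotWindowMs: number,
): DriftAction {
  if (configHash(currentConfig) === storedHash) return "keep";
  return nowMs - lastUsedAtMs < hotWindowMs ? "warn" : "recreate";
}
```

Hashing a canonical form matters because two semantically identical configs can serialize differently, and a naive `JSON.stringify` comparison would then trigger needless container recreation.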

The Filesystem Bridge

The agent runs inside a container but needs to read and write project files. The filesystem bridge translates paths transparently:

- Host path `/home/user/project/src/app.ts` becomes container path `/workspace/src/app.ts`

- Read access uses the bind mount directly

- Write access uses an embedded Python mutation helper with atomic writes (temp file + `os.replace`)

For path safety, the bridge enforces multiple checks: `assertPathSafety()` rejects `../` traversal, symlinks are resolved via `readlink -f` and rejected if they escape the mount boundary, and `O_NOFOLLOW` prevents symlink-following on writes.
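The path translation and the traversal check can be sketched together. This is not the real `assertPathSafety()`; `toContainerPath` is a hypothetical helper that covers only the lexical `../` check, so symlink and hardlink escapes would still need the filesystem-level checks described above.

```typescript
import * as path from "node:path";

// Map a host path under hostRoot to its container path, rejecting anything
// that resolves outside hostRoot. Purely lexical: it does not touch the disk.
function toContainerPath(hostRoot: string, hostPath: string, containerRoot = "/workspace"): string {
  const resolved = path.posix.resolve(hostRoot, hostPath);
  const relative = path.posix.relative(hostRoot, resolved);
  if (relative === ".." || relative.indexOf("../") === 0 || path.posix.isAbsolute(relative)) {
    throw new Error(`path escapes workspace: ${hostPath}`);
  }
  return path.posix.join(containerRoot, relative);
}

const mapped = toContainerPath("/home/user/project", "/home/user/project/src/app.ts");
// mapped is "/workspace/src/app.ts"
```

Resolving first and comparing afterwards handles inputs such as `src/../../secret`, which contain no leading `../` but still climb out of the root.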

The SSH Backend

The SSH backend implements the same SandboxBackendHandle interface but runs commands on a remote machine. Key differences: file transfer uses tar piped through SSH instead of bind mounts, it manages remote directories instead of container lifecycles, and SSH key handling includes CRLF normalization and BOM removal.
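Key normalization is the easiest of these to illustrate. The sketch below is a hypothetical `normalizePrivateKey` helper, not OpenClaw's code: it strips a leading byte-order mark and converts CRLF (or bare CR) line endings to LF. Appending a trailing newline is an extra assumption, added because key files are conventionally newline-terminated.

```typescript
// Clean up a private key pasted from an editor or a Windows machine.
function normalizePrivateKey(raw: string): string {
  const withoutBom = raw.charCodeAt(0) === 0xfeff ? raw.slice(1) : raw; // drop UTF-8 BOM
  const unixNewlines = withoutBom.replace(/\r\n?/g, "\n");              // CRLF or CR to LF
  return unixNewlines.slice(-1) === "\n" ? unixNewlines : unixNewlines + "\n";
}
```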

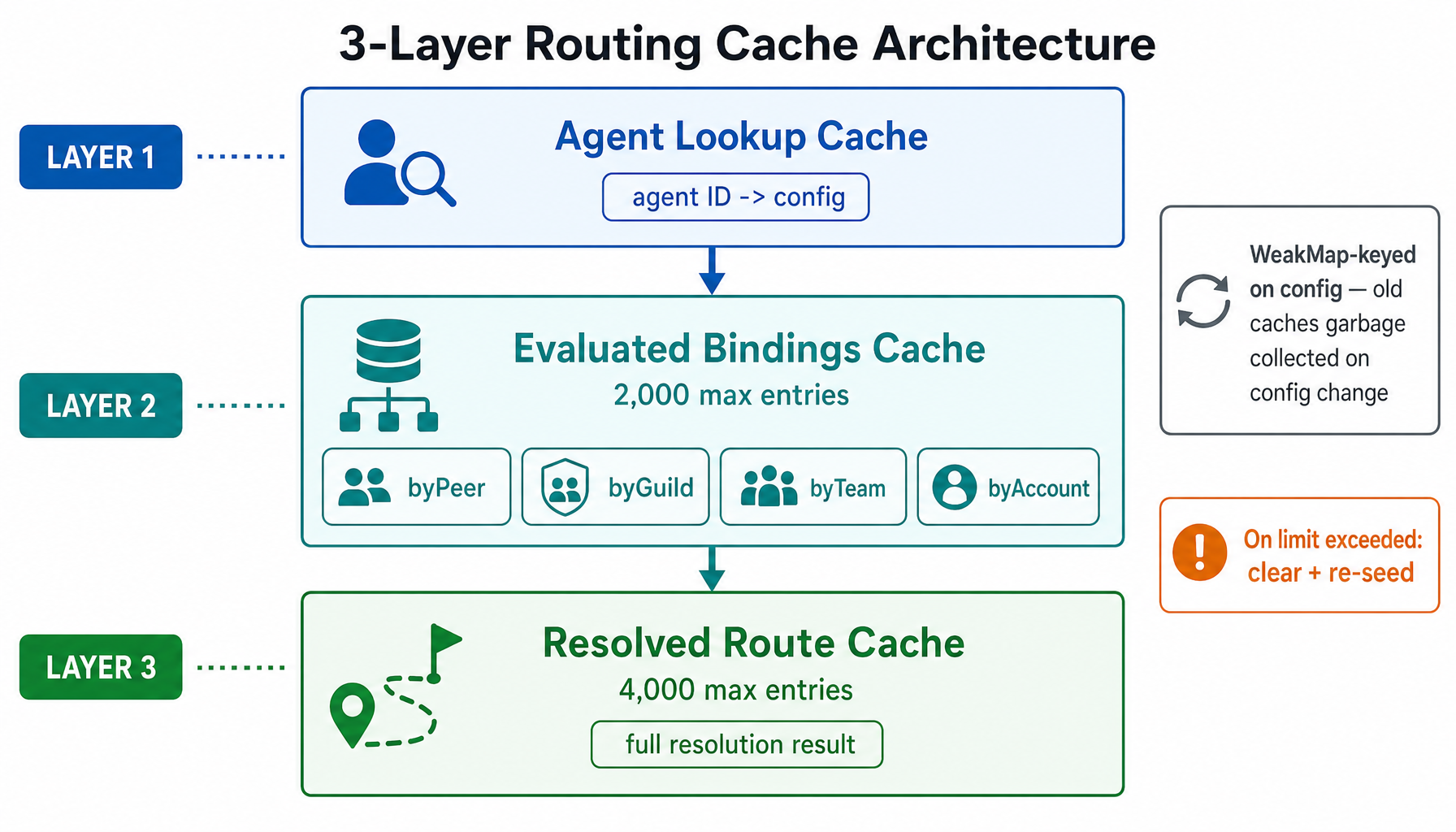

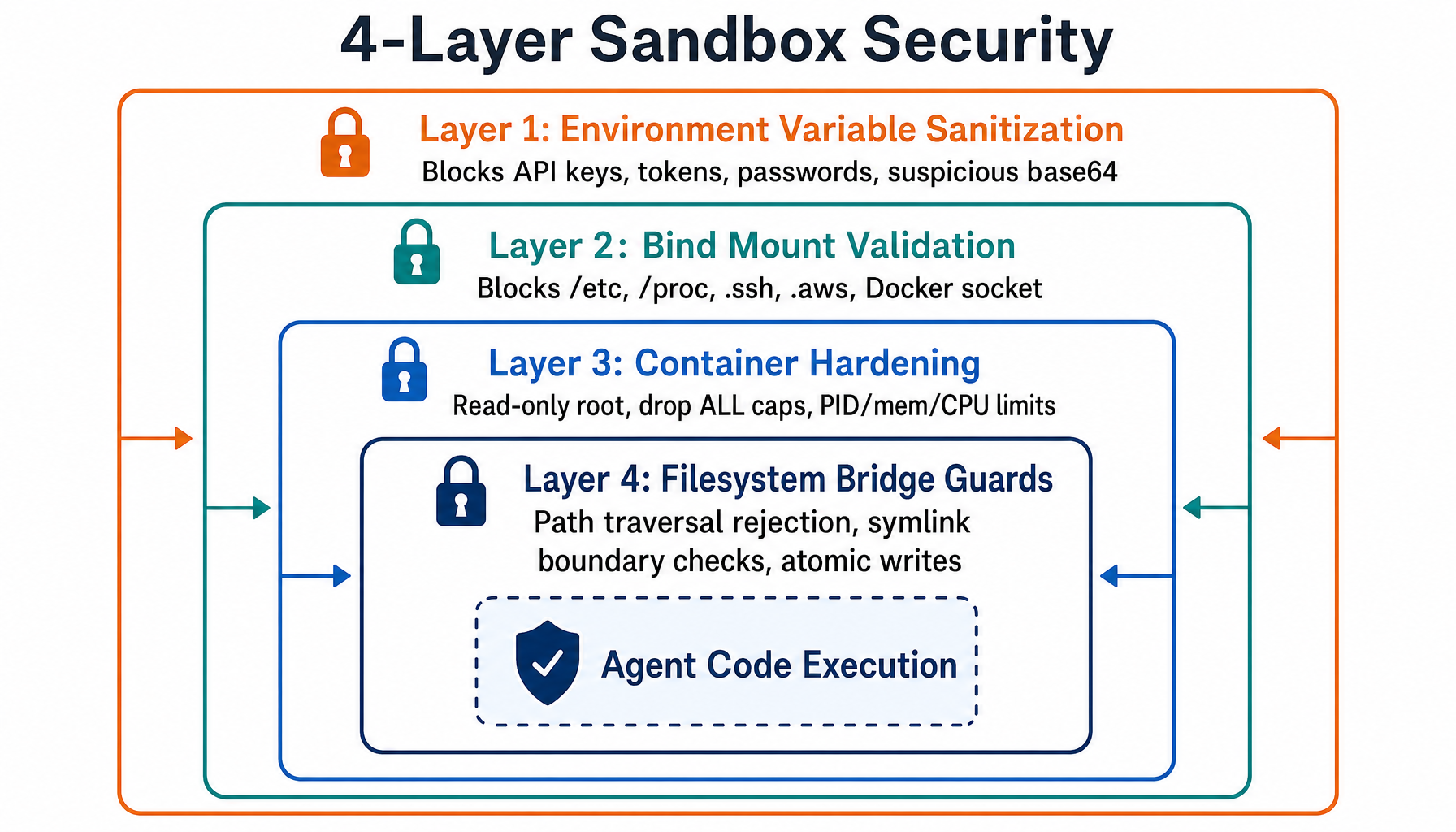

4-Layer Security Model

OpenClaw's sandbox enforces security at four simultaneous layers:

Layer 1 — Environment Variable Sanitization: Blocks patterns like `*_API_KEY`, `*_TOKEN`, and `*_PASSWORD`, suspicious base64 strings (80+ chars), and null byte injection. Strict mode only allows `LANG`, `PATH`, `HOME`, `USER`, `SHELL`, `TERM`, `TZ`, `NODE_ENV`, and locale settings.

Layer 2 — Bind Mount Validation: Blocks host paths to /etc, /proc, /sys, /dev, .ssh, .aws, .docker, and the Docker socket. Also blocks relative source paths and symlinks that escape allowed roots.

Layer 3 — Container Hardening: Read-only root filesystem, all capabilities dropped, no-new-privileges security option, memory/CPU/PID limits, network isolation (no host mode), and no unconfined seccomp/AppArmor.

Layer 4 — Filesystem Bridge Guards: Path traversal rejection, symlink resolution with boundary checking, hardlink detection via inode validation, mount-aware access control (ro vs rw), and atomic writes via temp file + rename.
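Layers 1 and 2 lend themselves to a short sketch. The block lists come from the descriptions above; the function names, the exact regular expressions, the `LC_` prefix for locale settings, and the second Docker socket path are assumptions. Symlink resolution is left out because it needs filesystem access.

```typescript
import * as path from "node:path";

const SECRET_NAME = /(_API_KEY|_TOKEN|_PASSWORD)$/i;
const LONG_BASE64 = /^[A-Za-z0-9+\/]{80,}={0,2}$/;
const STRICT_ALLOW = ["LANG", "PATH", "HOME", "USER", "SHELL", "TERM", "TZ", "NODE_ENV"];

// Layer 1: drop variables that look like secrets or carry null bytes.
function sanitizeEnv(env: Record<string, string>, strict = false): Record<string, string> {
  const clean: Record<string, string> = {};
  for (const name of Object.keys(env)) {
    const value = env[name];
    if (value === undefined) continue;
    if (name.indexOf("\0") !== -1 || value.indexOf("\0") !== -1) continue; // null byte injection
    if (SECRET_NAME.test(name) || LONG_BASE64.test(value)) continue;       // likely credentials
    const allowedInStrict = STRICT_ALLOW.indexOf(name) !== -1 || name.indexOf("LC_") === 0;
    if (strict && !allowedInStrict) continue;
    clean[name] = value;
  }
  return clean;
}

const BLOCKED_ROOTS = ["/etc", "/proc", "/sys", "/dev"];
const BLOCKED_DIRS = [".ssh", ".aws", ".docker"];
const DOCKER_SOCKETS = ["/var/run/docker.sock", "/run/docker.sock"];

// Layer 2: refuse bind mounts whose host source is relative or sensitive.
function isBindSourceAllowed(source: string): boolean {
  if (!path.posix.isAbsolute(source)) return false;
  const normalized = path.posix.normalize(source); // collapses "a/../b" before checking
  if (DOCKER_SOCKETS.indexOf(normalized) !== -1) return false;
  if (BLOCKED_ROOTS.some((root) => normalized === root || normalized.indexOf(root + "/") === 0)) return false;
  return !normalized.split("/").some((segment) => BLOCKED_DIRS.indexOf(segment) !== -1);
}
```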

Tool Policy Enforcement

Even inside a sandbox, not all tools should be available. The policy system provides fine-grained control with glob patterns:
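The original policy example is not reproduced here, so the following is a hypothetical sketch of glob-based allow/deny matching. The `ToolPolicy` shape, the tool names, the message wording, and the rule that deny patterns take precedence over allow patterns are all assumptions.

```typescript
interface ToolPolicy { allow: string[]; deny: string[] }

// Translate a glob with "*" wildcards into an anchored regular expression.
function globToRegExp(glob: string): RegExp {
  const escaped = glob.replace(/[.+?^${}()|[\]\\]/g, "\\$&").replace(/\*/g, ".*");
  return new RegExp(`^${escaped}$`);
}

function checkTool(policy: ToolPolicy, tool: string): { allowed: boolean; message?: string } {
  const matchesAny = (patterns: string[]) => patterns.some((p) => globToRegExp(p).test(tool));
  if (matchesAny(policy.deny)) {
    return {
      allowed: false,
      message: `Tool "${tool}" is denied by the sandbox policy. To allow it, remove the matching deny pattern.`,
    };
  }
  if (matchesAny(policy.allow)) return { allowed: true };
  return {
    allowed: false,
    message: `Tool "${tool}" is not in the sandbox allow list. To allow it, add a matching allow pattern.`,
  };
}

// Hypothetical tool names for illustration.
const examplePolicy: ToolPolicy = { allow: ["fs.*", "web.fetch"], deny: ["fs.delete*"] };
```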

When a denied tool is called, the agent gets a helpful message with instructions on how to allow it — not just a silent failure.

Implications for Self-Hosted AI

OpenClaw's architecture reflects hard-won lessons from running AI agents across multiple platforms at scale. The 8-tier routing system handles the combinatorial explosion of users × servers × roles × platforms without becoming a maintenance nightmare. The pluggable sandbox system means you're not locked into Docker — you can run agents on remote SSH machines, and the community can contribute new backends.

For anyone building multi-platform AI assistants, these patterns — priority cascade routing, WeakMap-keyed caching, pluggable isolation backends, and multi-layer security — are directly applicable regardless of your tech stack.

FAQ

How does OpenClaw decide which agent handles a Discord thread?

OpenClaw uses Tier 2 (Parent Peer) matching. When a message arrives in a thread, the router checks whether the thread's parent channel has a binding. If the parent channel `#dev-help` is bound to `opus-coder`, all threads spawned from that channel automatically inherit the same agent, with no per-thread configuration needed.

Can I use OpenClaw without Docker for sandboxing?

Yes. OpenClaw ships with both Docker and SSH sandbox backends, and the pluggable architecture lets you register custom backends via `registerSandboxBackend()`. The SSH backend runs agent commands on a remote machine over SSH, using tar-piped file transfers instead of bind mounts. You can also set `sandbox.mode = "off"` for trusted sessions that don't need isolation.

What happens when an agent tries to access sensitive files inside a sandbox?

The 4-layer security model blocks it at multiple levels. If the agent tries path traversal (`../../../etc/passwd`), the filesystem bridge's `assertPathSafety()` rejects it before it reaches the container. If a symlink points outside the mount boundary, `readlink -f` resolution catches it. Environment variables containing API keys or tokens are stripped before the container starts. Even if all that fails, the read-only root filesystem and dropped capabilities prevent privilege escalation.

How does OpenClaw handle sandbox config changes without breaking running agents?

OpenClaw computes a SHA-256 hash of each agent's sandbox configuration and compares it against the hash stored when the container was created. If the hashes differ (e.g., you changed the Docker image), it detects the drift. For recently-used "hot" containers, it warns before recreating. For idle containers, it recreates silently. This prevents surprise interruptions during active conversations while ensuring config changes eventually take effect.

About the Author

Aaron is an engineering leader, software architect, and founder with 18 years of experience building distributed systems and cloud infrastructure. He now focuses on LLM-powered platforms, agent orchestration, and production AI, and shares hands-on technical guides and framework comparisons at fp8.co.
