AgentCore vs LangGraph: Agent Orchestration Compared (2026)

Compare AgentCore and LangGraph for AI agent orchestration. State management, deployment, and pricing explained with code.

TL;DR: AgentCore is a managed AWS runtime for deploying and operating AI agents with built-in scaling, memory, and security. LangGraph is an open-source graph-based framework for building stateful, controllable agent workflows with explicit state machines. Choose AgentCore when you need managed infrastructure and production operations on AWS. Choose LangGraph when you need fine-grained control over agent execution flow, branching logic, human-in-the-loop patterns, or complex multi-step orchestration. They are complementary -- you can orchestrate agent logic with LangGraph and deploy it on AgentCore Runtime.

Key Takeaways

- LangGraph models agents as directed graphs with explicit state, enabling cycles, conditional branching, and human-in-the-loop patterns that traditional linear chains cannot express.

- AgentCore provides managed runtime, memory, code execution, browser automation, and tool gateway -- handling all infrastructure concerns for production agents on AWS.

- LangGraph's core differentiator is stateful orchestration: every node reads and writes to a typed state object, with built-in checkpointing for pause, resume, and replay.

- AgentCore's core differentiator is operational simplicity: auto-scaling, IAM security, managed memory with semantic search, and zero infrastructure management.

- LangGraph is free and open-source (MIT license); AgentCore follows AWS pay-as-you-go pricing. LangGraph Cloud offers optional managed hosting.

- The two tools solve different problems and combine well: LangGraph for orchestration logic, AgentCore Runtime for production deployment.

Introduction

AI agent orchestration in 2026 has split into two distinct camps: managed platforms that handle infrastructure, and open-source frameworks that give developers explicit control over agent behavior. Amazon Bedrock AgentCore and LangGraph are prominent examples of each approach, and developers building production agents need to understand when each tool is the right choice.

This comparison matters because agent orchestration is fundamentally harder than single-turn LLM calls. Real-world agents make decisions across multiple steps, maintain state between those steps, recover from failures, and sometimes need human approval before proceeding. How you handle that orchestration -- whether through a managed runtime or an explicit state graph -- determines your agent's reliability, debuggability, and operational cost.

AgentCore and LangGraph are not competing for the same layer. AgentCore is infrastructure. LangGraph is orchestration logic. But they overlap enough in developer mindshare that teams frequently ask which one to adopt, and the answer is often both.

Quick Overview

Amazon Bedrock AgentCore

AgentCore is Amazon Bedrock's managed runtime and infrastructure service for AI agents. It provides five integrated components: Memory for persistent context with semantic search, Runtime for auto-scaling agent hosting, Code Interpreter for sandboxed execution, Browser for cloud-based web automation, and Gateway for MCP-based tool integration. AgentCore abstracts away deployment, scaling, monitoring, and security, allowing developers to focus on agent logic. It is tightly coupled to the AWS ecosystem and requires an AWS account.

LangGraph

LangGraph is an open-source framework built by the LangChain team for creating stateful, multi-step agent applications. Released under the MIT license, LangGraph models agent workflows as directed graphs where nodes represent computation steps and edges define transitions based on state. Its key innovation is explicit state management: agents maintain a typed state object (using Python's TypedDict) that every node can read and write, with automatic checkpointing for persistence. LangGraph supports cycles, conditional branching, parallel execution, and human-in-the-loop patterns. It runs locally, on any cloud, or via LangGraph Cloud for managed deployment.

Comparison Table

Detailed Comparison

Architecture and Design Philosophy

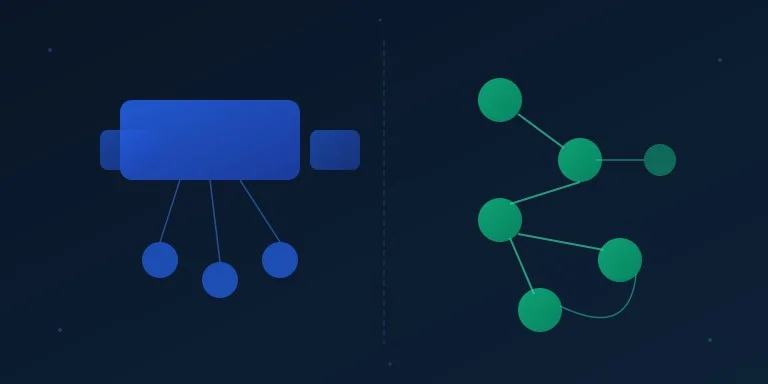

The architectural difference between AgentCore and LangGraph reflects fundamentally different answers to the question "what is an agent?"

AgentCore treats an agent as a service endpoint. You write agent logic, package it, and deploy it as a managed service that receives requests and returns responses. AgentCore handles everything around that logic: scaling, health checks, memory persistence, tool access, and security. The agent's internal decision-making process is a black box from AgentCore's perspective -- it manages the runtime, not the reasoning.

LangGraph treats an agent as a state machine. You define the agent's behavior as a graph where each node performs a computation (LLM call, tool execution, data transformation) and edges determine what happens next based on the current state. This makes the agent's decision flow explicit and inspectable. You can see exactly which node executed, what state was passed, and why a particular branch was taken.
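To make the state-machine idea concrete, here is a minimal plain-Python sketch. It is not LangGraph's API: `AgentState`, `run_graph`, and the node names are hypothetical, and the "LLM call" is a stand-in string operation. It only illustrates the pattern described above: nodes return state updates, and an edge function picks the next node from the current state, which allows a cycle.

```python
from typing import Callable, Dict, List, TypedDict


class AgentState(TypedDict):
    question: str
    draft: str
    attempts: int
    trace: List[str]  # records which nodes ran, in order


def write_draft(state: AgentState) -> dict:
    # Stand-in for an LLM call: produce a new draft and count the attempt.
    attempts = state["attempts"] + 1
    return {"draft": f"answer v{attempts} to: {state['question']}", "attempts": attempts}


def finalize(state: AgentState) -> dict:
    # Stand-in for a post-processing step.
    return {"draft": state["draft"].upper()}


def route_after_draft(state: AgentState) -> str:
    # Conditional edge: loop back (a cycle) until two drafts exist.
    return "write_draft" if state["attempts"] < 2 else "finalize"


END = "__end__"
NODES: Dict[str, Callable[[AgentState], dict]] = {
    "write_draft": write_draft,
    "finalize": finalize,
}
EDGES: Dict[str, Callable[[AgentState], str]] = {
    "write_draft": route_after_draft,
    "finalize": lambda state: END,
}


def run_graph(state: AgentState, entry: str = "write_draft") -> AgentState:
    current = entry
    while current != END:
        update = NODES[current](state)
        # Merge the node's update into the state and record the visit.
        state = {**state, **update, "trace": state["trace"] + [current]}
        current = EDGES[current](state)
    return state


result = run_graph({"question": "What is AgentCore?", "draft": "", "attempts": 0, "trace": []})
print(result["trace"])  # ['write_draft', 'write_draft', 'finalize']
```

Because the trace is part of the state, you can see which node ran and why a branch was taken, which is the inspectability the graph model is meant to provide.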

The practical consequence: LangGraph gives you more control over agent behavior but requires you to design the graph. AgentCore gives you less control over orchestration but eliminates operational complexity.

State Management

State management is where LangGraph most clearly differentiates itself -- not just from AgentCore, but from nearly every other agent framework.

In LangGraph, state is a first-class concept. Every graph has a typed state schema (typically a TypedDict), and every node receives the current state and returns updates to it. State transitions are explicit: a deterministic routing function always selects the same edge for the same state, even though the LLM calls inside a node may return different outputs from run to run. When a graph is compiled with a checkpointer, LangGraph persists state after every step, enabling pause/resume workflows, time-travel debugging (replaying from any checkpoint), and crash recovery. This is powerful for complex agents that need to maintain context across many steps.
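The checkpointing idea can be sketched in a few lines of plain Python. This is an illustration of the technique, not LangGraph's checkpointer: `InMemoryCheckpointer`, `run_pipeline`, and the step functions are hypothetical names, and storage is an in-process list rather than a database.

```python
import copy
from typing import Callable, List, Tuple, TypedDict


class PipelineState(TypedDict):
    values: List[int]
    total: int


class InMemoryCheckpointer:
    """Stores a deep copy of the state after every step."""

    def __init__(self) -> None:
        self._history: List[Tuple[str, PipelineState]] = []

    def save(self, step_name: str, state: PipelineState) -> int:
        self._history.append((step_name, copy.deepcopy(state)))
        return len(self._history) - 1  # checkpoint id

    def load(self, checkpoint_id: int) -> Tuple[str, PipelineState]:
        step_name, state = self._history[checkpoint_id]
        return step_name, copy.deepcopy(state)


def load_values(state: PipelineState) -> dict:
    return {"values": [1, 2, 3]}


def sum_values(state: PipelineState) -> dict:
    return {"total": sum(state["values"])}


def double_total(state: PipelineState) -> dict:
    return {"total": state["total"] * 2}


PIPELINE: List[Tuple[str, Callable[[PipelineState], dict]]] = [
    ("load", load_values),
    ("sum", sum_values),
    ("double", double_total),
]


def run_pipeline(state: PipelineState, checkpointer: InMemoryCheckpointer,
                 start_index: int = 0) -> PipelineState:
    for name, step in PIPELINE[start_index:]:
        state = {**state, **step(state)}
        checkpointer.save(name, state)  # persist after every step
    return state


checkpointer = InMemoryCheckpointer()
final = run_pipeline({"values": [], "total": 0}, checkpointer)

# Time travel: reload the checkpoint taken after "sum" (id 1),
# edit the state, and replay only the remaining step.
step_name, snapshot = checkpointer.load(1)
snapshot["total"] = 100
replayed = run_pipeline(snapshot, checkpointer, start_index=2)
print(final["total"], replayed["total"])  # 12 200
```

Crash recovery follows the same shape: load the latest checkpoint and continue from the next step instead of restarting the whole run.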

AgentCore Memory provides persistent context through a different mechanism: it is a managed semantic memory service. Rather than checkpointing agent execution state, AgentCore Memory stores and retrieves contextual information using natural language queries. It supports hierarchical organization by actors and sessions, semantic search over stored memories, and custom memory strategies. This is well-suited for conversational memory and long-term user context, but it does not provide the step-by-step execution state that LangGraph's checkpointing offers.
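As a rough mental model of memory organized by actor and session with search-based retrieval, consider the toy store below. It is not the AgentCore Memory API: `ToyMemoryStore` and its methods are hypothetical, and simple word overlap stands in for real semantic (embedding-based) search.

```python
from collections import defaultdict
from typing import DefaultDict, List, Tuple


class ToyMemoryStore:
    """Memories keyed by (actor_id, session_id); search spans an actor's sessions."""

    def __init__(self) -> None:
        self._records: DefaultDict[Tuple[str, str], List[str]] = defaultdict(list)

    def add(self, actor_id: str, session_id: str, text: str) -> None:
        self._records[(actor_id, session_id)].append(text)

    def search(self, actor_id: str, query: str, top_k: int = 2) -> List[Tuple[str, str]]:
        # Word overlap is a crude placeholder for semantic similarity.
        query_words = set(query.lower().split())
        scored = []
        for (actor, session), texts in self._records.items():
            if actor != actor_id:
                continue  # never leak another actor's memories
            for text in texts:
                overlap = len(query_words & set(text.lower().split()))
                if overlap:
                    scored.append((overlap, session, text))
        scored.sort(key=lambda item: item[0], reverse=True)
        return [(session, text) for _, session, text in scored[:top_k]]


store = ToyMemoryStore()
store.add("user-1", "session-a", "prefers vegetarian food")
store.add("user-1", "session-b", "is allergic to peanuts")
store.add("user-2", "session-c", "prefers spicy food")

hits = store.search("user-1", "what food does the user like")
print(hits)  # [('session-a', 'prefers vegetarian food')]
```

Note what is absent: nothing here records which step an agent was executing. The store holds facts about a user, which is the distinction from execution checkpoints drawn above.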

For multi-step agents that need to pause, resume, branch, or replay from intermediate states, LangGraph's approach is more appropriate. For agents that need persistent conversational memory with semantic retrieval across sessions, AgentCore Memory is simpler to operate.

Deployment and Infrastructure

AgentCore provides a complete deployment pipeline. You write your agent, call runtime.launch(), and AgentCore handles containerization, ECR image creation, auto-scaling, health monitoring, and endpoint provisioning. The deployment target is always AWS, and you get IAM-based access control, CloudWatch integration, and managed networking out of the box.

LangGraph itself has no deployment mechanism -- it is a library you import into your Python application. You deploy LangGraph agents however you deploy Python applications: as a FastAPI service, a Lambda function, a Docker container, or any other packaging. This gives you full control but requires you to manage all operational concerns.

LangGraph Cloud bridges this gap by providing managed deployment specifically for LangGraph applications. It offers hosted endpoints, built-in persistence, streaming support, and a studio UI for debugging agent graphs. LangGraph Cloud is a paid service from LangChain Inc. and is the closest equivalent to AgentCore's managed deployment, though it is specifically optimized for graph-based agents rather than being a general-purpose agent runtime.

Pricing

AgentCore uses AWS pay-as-you-go pricing across its components: compute time for Runtime, per-operation charges for Memory, execution duration for Code Interpreter, session minutes for Browser, and API calls for Gateway. Costs scale with usage, so they stay proportional to traffic, but they can accumulate quickly for high-throughput applications.
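A small calculator helps reason about how these billing dimensions add up. The sketch below uses the dimensions named above, but every rate in `PLACEHOLDER_RATES` is an invented placeholder, not an AWS price; substitute the current figures from the AWS pricing page before drawing any conclusions.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class UsageEstimate:
    runtime_compute_hours: float
    memory_operations: int
    code_interpreter_hours: float
    browser_session_minutes: float
    gateway_api_calls: int


# PLACEHOLDER values for illustration only. These are NOT real AWS prices.
PLACEHOLDER_RATES: Dict[str, float] = {
    "runtime_compute_hours": 0.10,
    "memory_operations": 0.0005,
    "code_interpreter_hours": 0.10,
    "browser_session_minutes": 0.002,
    "gateway_api_calls": 0.00001,
}


def estimate_monthly_cost(usage: UsageEstimate, rates: Dict[str, float]) -> Dict[str, float]:
    # Multiply each usage dimension by its rate, then add a total.
    breakdown = {name: getattr(usage, name) * rate for name, rate in rates.items()}
    breakdown["total"] = sum(breakdown.values())
    return breakdown


usage = UsageEstimate(
    runtime_compute_hours=100,
    memory_operations=20_000,
    code_interpreter_hours=50,
    browser_session_minutes=1_000,
    gateway_api_calls=100_000,
)
costs = estimate_monthly_cost(usage, PLACEHOLDER_RATES)
for name, amount in costs.items():
    print(f"{name}: {amount:.2f}")
```

Running the same function with a 10x traffic assumption is a quick way to see which dimension dominates as throughput grows.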

LangGraph is free and open-source under the MIT license. Your costs are infrastructure (servers, databases) and LLM API calls. LangGraph Cloud pricing is separate and based on usage tiers. For teams with existing infrastructure, running LangGraph directly is typically more cost-effective. For teams without DevOps resources, the combined cost of LangGraph Cloud or AgentCore may be justified by reduced operational burden.

When to Choose AgentCore

Choose AgentCore when:

- Your team operates within the AWS ecosystem and wants managed infrastructure for production agents.

- You need auto-scaling, IAM security, and CloudWatch monitoring without building them yourself.

- Your agents are relatively straightforward request-response services that do not require complex branching or stateful orchestration.

- You want managed memory with semantic search for conversational context across users and sessions.

- Your team lacks dedicated DevOps resources and needs a zero-infrastructure deployment path.

- You need managed browser automation or sandboxed code execution as part of your agent capabilities.

When to Choose LangGraph

Choose LangGraph when:

- Your agent requires complex multi-step workflows with conditional branching, cycles, or parallel execution paths.

- You need explicit state management with checkpointing for pause/resume, time-travel debugging, or crash recovery.

- Human-in-the-loop patterns are central to your agent design -- approval gates, review steps, or interactive refinement.

- You want full control over the agent's execution graph and need to inspect or modify behavior at every step.

- You are building across multiple cloud providers or need to run agents locally during development.

- Your team values open-source software and wants to avoid vendor lock-in for the orchestration layer.

- You need streaming output from intermediate agent steps, not just the final response.
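The human-in-the-loop pattern from the list above can be sketched as a workflow that stops at an approval gate and resumes later from the same step. This is a plain-Python illustration, not LangGraph's interrupt mechanism; `NeedsApproval`, `run_refund`, and the step names are hypothetical.

```python
from typing import Callable, List, Optional, Tuple, TypedDict


class RefundState(TypedDict):
    amount: float
    approved: Optional[bool]  # None means no human decision yet
    status: str


class NeedsApproval(Exception):
    """Raised by a step that cannot proceed without a human decision."""


def propose_refund(state: RefundState) -> dict:
    return {"status": "awaiting_approval"}


def approval_gate(state: RefundState) -> dict:
    if state["approved"] is None:
        raise NeedsApproval()
    return {}


def issue_refund(state: RefundState) -> dict:
    return {"status": "refunded" if state["approved"] else "rejected"}


REFUND_STEPS: List[Callable[[RefundState], dict]] = [
    propose_refund,
    approval_gate,
    issue_refund,
]


def run_refund(state: RefundState, start: int = 0) -> Tuple[RefundState, Optional[int]]:
    """Run steps from `start`. Returns (state, index to resume at, or None if done)."""
    for index in range(start, len(REFUND_STEPS)):
        try:
            state = {**state, **REFUND_STEPS[index](state)}
        except NeedsApproval:
            return state, index  # pause here; the state can be persisted
    return state, None


# First run pauses at the gate.
paused_state, resume_at = run_refund({"amount": 42.0, "approved": None, "status": "new"})

# Later, a reviewer approves and the workflow resumes at the same step.
final_state, remaining = run_refund({**paused_state, "approved": True}, start=resume_at)
print(paused_state["status"], resume_at, final_state["status"])  # awaiting_approval 1 refunded
```

In a real system the paused state and resume index would be written to durable storage, so the approval can arrive minutes or days later in a different process.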

Can You Use Both Together?

Yes, and this is often the best approach for production systems.

LangGraph and AgentCore operate at different layers of the stack. LangGraph defines how your agent thinks -- the orchestration logic, state transitions, and decision flow. AgentCore defines how your agent runs -- the deployment, scaling, memory persistence, and security infrastructure.

A practical architecture combines them: build your agent's orchestration as a LangGraph state machine with explicit branching, human-in-the-loop gates, and checkpointed state. Then wrap that LangGraph agent in an AgentCore Runtime application for managed deployment on AWS. You get LangGraph's controllable orchestration with AgentCore's operational simplicity.
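The layering can be sketched as a single request handler that wraps the orchestration logic. Everything here is an assumption for illustration: `invoke` is a hypothetical handler name, the payload shape is invented, and the two-node pipeline stands in for a real LangGraph graph. In an actual deployment, a function like this would be registered with the runtime SDK's entrypoint hook, which is not shown.

```python
import json
from typing import Dict


def classify(state: Dict[str, str]) -> Dict[str, str]:
    # Stand-in for an LLM-based router node.
    topic = "billing" if "invoice" in state["prompt"].lower() else "general"
    return {"topic": topic}


def respond(state: Dict[str, str]) -> Dict[str, str]:
    # Stand-in for an answer-generation node.
    return {"reply": f"[{state['topic']}] received: {state['prompt']}"}


def run_orchestration(prompt: str) -> Dict[str, str]:
    """Orchestration layer: this is where a compiled graph would be invoked."""
    state = {"prompt": prompt, "topic": "", "reply": ""}
    for node in (classify, respond):
        state = {**state, **node(state)}
    return state


def invoke(payload: dict) -> dict:
    """Runtime layer: validate the request, call the orchestration, shape the response."""
    prompt = payload.get("prompt")
    if not isinstance(prompt, str) or not prompt.strip():
        return {"error": "payload must include a non-empty 'prompt' string"}
    state = run_orchestration(prompt)
    return {"topic": state["topic"], "reply": state["reply"]}


response = invoke({"prompt": "Where is my invoice?"})
print(json.dumps(response))
```

Keeping the handler thin means the orchestration can be tested locally without any cloud dependency, and the hosting layer can change without touching the graph.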

You can also use AgentCore Memory alongside LangGraph's checkpointing: use LangGraph checkpoints for execution state (which step the agent is on) and AgentCore Memory for long-term semantic memory (what the agent remembers about a user across sessions).

Frequently Asked Questions

What is LangGraph?

LangGraph is an open-source Python framework built by the LangChain team for creating stateful, multi-step agent applications using graph-based orchestration. Unlike linear chains or simple ReAct loops, LangGraph models agent workflows as directed graphs where nodes represent computation steps (LLM calls, tool use, data processing) and edges define transitions based on the current state. It supports cycles, conditional branching, parallel execution, and human-in-the-loop patterns. LangGraph's key innovation is explicit state management with automatic checkpointing, enabling agents that can pause, resume, replay, and recover from failures. It is MIT licensed and can run locally, on any cloud, or via LangGraph Cloud for managed hosting.

How does LangGraph differ from LangChain?

LangChain is a general-purpose framework for building LLM applications with composable abstractions for chains, agents, tools, and memory. LangGraph is a specialized library built on top of LangChain's primitives that adds graph-based orchestration with explicit state management. Think of LangChain as the building blocks (model wrappers, tool interfaces, prompt templates) and LangGraph as the orchestration layer that connects those blocks into controllable stateful workflows. LangChain's built-in agent executors use simple loops (like ReAct), while LangGraph lets you design arbitrary execution graphs with branching, cycles, and checkpointing. You typically use both together: LangChain components as the nodes in a LangGraph state machine.

Can I use LangGraph with Amazon Bedrock?

Yes. LangGraph is model-agnostic and works with any LLM provider, including Amazon Bedrock. You use the langchain-aws package to connect LangGraph nodes to Bedrock models like Claude, Llama, or Mistral. You can also deploy a LangGraph agent on AgentCore Runtime for managed AWS hosting, or use AgentCore Memory for long-term context alongside LangGraph's execution checkpointing. The combination of LangGraph's orchestration with Bedrock's model access and AgentCore's infrastructure gives you a complete stack for production agents on AWS.

Which is better for complex multi-step agents?

For complex agents with branching logic, conditional paths, human approval gates, or cyclic reasoning, LangGraph is the stronger choice. Its graph-based architecture was specifically designed for these patterns, and its typed state management with checkpointing makes complex workflows debuggable and recoverable. AgentCore does not prescribe an orchestration pattern -- it provides the runtime and infrastructure for whatever agent logic you build. For complex orchestration deployed on AWS, a practical approach is to use LangGraph for the agent's decision graph and AgentCore Runtime for managed production deployment. This combines controllable orchestration with operational simplicity.

About the Author

Aaron is an engineering leader, software architect, and founder with 18 years of experience building distributed systems and cloud infrastructure. He now focuses on LLM-powered platforms, agent orchestration, and production AI, and shares hands-on technical guides and framework comparisons at fp8.co.
