GPT Image 2 vs Gemini 3 Pro Benchmark 2026

TL;DR: We ran identical prompts through OpenAI's GPT Image 2 and Google's Gemini 3 Pro across 8 categories. Gemini generates images roughly 4x faster (avg 28s vs 112s) and produces larger files (avg 3.3 MB vs 2.5 MB). GPT Image 2 delivers tighter prompt adherence in product photography and food styling. Text rendering is strong on both: Gemini nails layout composition, while GPT Image 2 captures vintage texture better. Neither model is strictly better; the right choice depends on your use case and latency tolerance.

Key Takeaways

- Gemini 3 Pro averages 28 seconds per image vs GPT Image 2's 112 seconds, roughly a 4x speed advantage

- GPT Image 2 produces smaller files (avg 2.5 MB) with more compressed detail; Gemini outputs larger files (avg 3.3 MB) with more raw information

- Both models handle text rendering accurately — a major improvement over 2024-era models

- Gemini excels at architectural visualization, dense multi-element scenes, and atmospheric illustration

- GPT Image 2 excels at photorealistic skin texture, product photography lighting, and food plating detail

- For production workloads, Gemini's speed makes it the more practical option for interactive, latency-sensitive applications; GPT Image 2 is better suited to batch processing where quality justifies the wait

GPT Image 2 vs Gemini 3 Pro: Image Generation Benchmark

Methodology

We tested both models on April 23, 2026 using identical prompts across 8 categories designed to stress different capabilities. Each model received the exact same prompt text with no model-specific optimization.

The benchmark code:
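As an illustration of the approach described above, here is a minimal sketch of a harness that sends the same prompt text to every model and records wall-clock time and output size. It is not the exact script used for this benchmark. The `Generator` interface, the `stub_model_a` and `stub_model_b` stand-ins, and the `Result` fields are all hypothetical; real API clients would need to be wrapped to match the `Generator` signature.

```python
import time
from dataclasses import dataclass
from typing import Callable, Dict, List

# Hypothetical interface: a generator takes prompt text and returns encoded image bytes.
Generator = Callable[[str], bytes]


@dataclass
class Result:
    model: str
    category: str
    seconds: float
    size_bytes: int


def run_benchmark(models: Dict[str, Generator], prompts: Dict[str, str]) -> List[Result]:
    """Send every prompt, unmodified, to every model; record time and output size."""
    results = []
    for category, prompt in prompts.items():
        for name, generate in models.items():
            start = time.perf_counter()
            image = generate(prompt)  # identical prompt text for each model
            elapsed = time.perf_counter() - start
            results.append(Result(name, category, elapsed, len(image)))
    return results


def average_seconds(results: List[Result], model: str) -> float:
    """Mean generation time for one model across all categories."""
    times = [r.seconds for r in results if r.model == model]
    return sum(times) / len(times)


# Two of the eight prompts from this article, copied verbatim.
PROMPTS = {
    "Product Design": (
        "A sleek matte black wireless earbud sitting on a polished marble surface, "
        "studio product photography, dramatic side lighting, 8K detail, advertising quality"
    ),
    "Architecture": (
        "A futuristic eco-friendly skyscraper covered in vertical gardens and solar panels, "
        "set against a clear blue sky in a modern cityscape, architectural visualization render"
    ),
}


# Stand-in generators so the sketch runs offline (assumption: replace with real client wrappers).
def stub_model_a(prompt: str) -> bytes:
    return b"\x00" * 2048


def stub_model_b(prompt: str) -> bytes:
    return b"\x00" * 1024


MODELS = {"model_a": stub_model_a, "model_b": stub_model_b}

results = run_benchmark(MODELS, PROMPTS)
for r in results:
    print(f"{r.model:8} {r.category:15} {r.seconds:.4f}s {r.size_bytes} bytes")
```

Keeping the generators behind a single callable type means the timing loop never contains model-specific logic, which matches the rule of no model-specific prompt optimization.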

author: "Aaron"

authorTitle: "Engineering Leader & AI Infrastructure Architect"

Speed Comparison

The most dramatic difference is generation time.

Gemini is consistently 3-5x faster across every category. For applications needing real-time or near-real-time image generation (product configurators, chat-based creation tools), this gap is decisive.
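To make the gap concrete, the short calculation below turns the two average generation times quoted in this article (28 s and 112 s) into a speedup ratio and a sequential throughput figure. It assumes one request at a time with no parallelism or queueing, which is a simplification. Because the inputs are rounded averages, the unrounded ratio may differ slightly.

```python
# Average generation times quoted in this article (rounded to whole seconds).
GEMINI_AVG_S = 28.0
GPT_AVG_S = 112.0


def images_per_hour(avg_seconds: float) -> float:
    """Sequential throughput, assuming one request at a time."""
    return 3600.0 / avg_seconds


speedup = GPT_AVG_S / GEMINI_AVG_S

print(f"Speedup: {speedup:.1f}x")                                        # Speedup: 4.0x
print(f"Gemini 3 Pro: {images_per_hour(GEMINI_AVG_S):.1f} images/hour")  # 128.6
print(f"GPT Image 2:  {images_per_hour(GPT_AVG_S):.1f} images/hour")     # 32.1
```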

Head-to-Head: All 8 Categories

1. Photorealism

Prompt: A close-up portrait photo of an elderly Japanese fisherman mending nets at dawn, golden hour lighting, shallow depth of field, shot on Hasselblad medium format

Verdict: GPT Image 2 wins on skin texture and pore-level detail — the hands look genuinely weathered. Gemini captures the golden hour ambient lighting more naturally and has a wider, more cinematic composition. Both are remarkably photorealistic.

2. Text Rendering

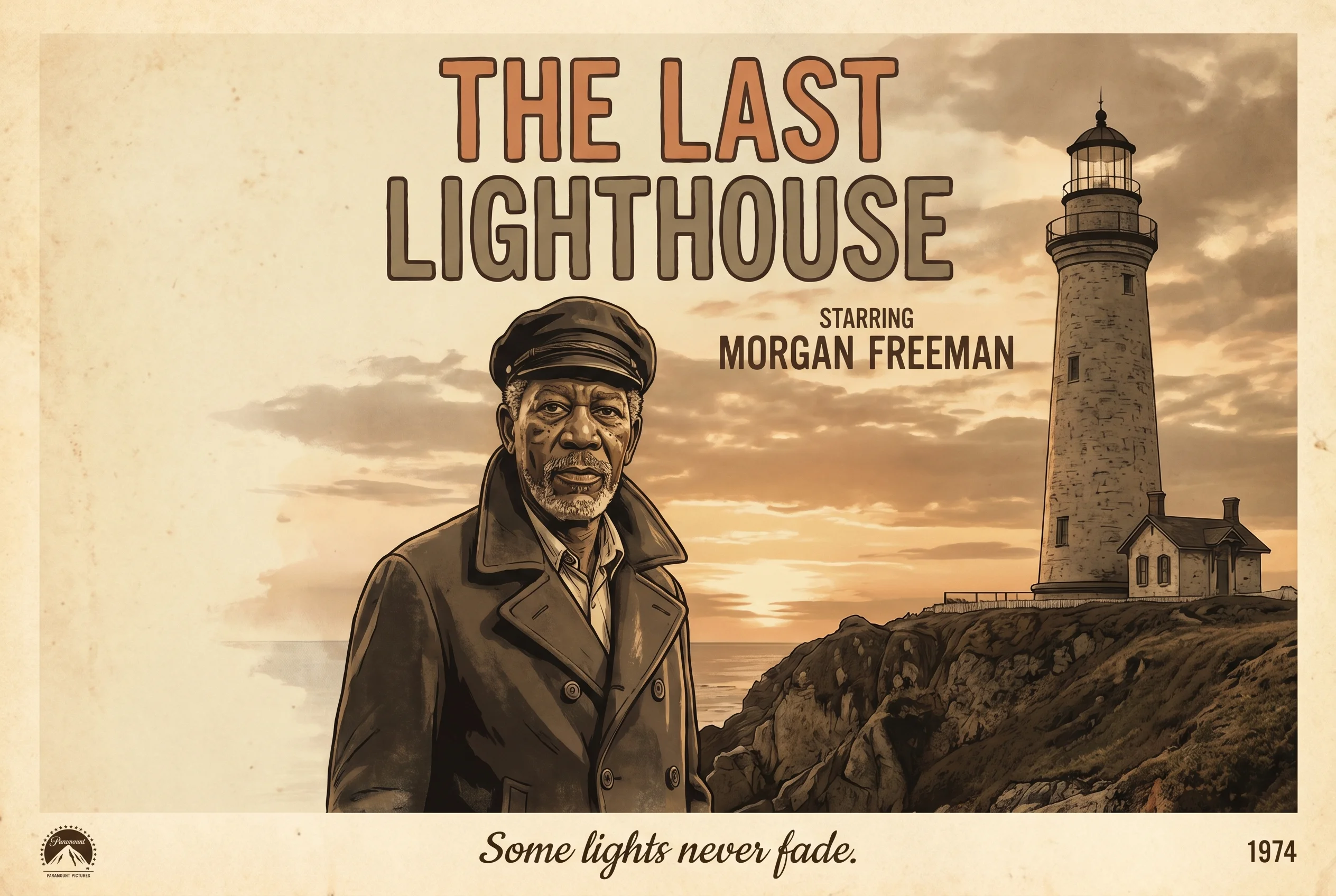

Prompt: A vintage movie poster for a film called 'THE LAST LIGHTHOUSE' starring Morgan Freeman, with the tagline 'Some lights never fade' at the bottom, 1970s aesthetic

Verdict: Both models render all text correctly — title, actor name, and tagline are spelled perfectly. Gemini produces a more polished poster layout with distinct vintage illustration style. GPT Image 2 delivers grittier film-grain texture that better captures the 1970s aesthetic. This category is a draw depending on style preference.

3. Complex Scene

Prompt: An aerial view of a bustling night market in Bangkok, hundreds of food stalls with glowing lanterns, steam rising from woks, crowds of people walking between rows, photorealistic

Verdict: Both handle the complexity well. Gemini's output has more stalls, more people, and more visual density — closer to the "hundreds" the prompt requested. GPT Image 2 has cleaner individual details but a slightly less crowded composition.

4. Illustration

Prompt: A children's book illustration of a fox and an owl reading a map together under a giant mushroom in a whimsical forest, watercolor style with soft pastel colors

Verdict: Both produce charming, publishable children's book illustrations. GPT Image 2 adds more character accessories (glasses, scarf, lantern, "Adventure Awaits" sign) and richer color saturation. Gemini has a softer, more ethereal watercolor quality with a wider fantasy environment. GPT Image 2 edges ahead on character design; Gemini wins on atmospheric mood.

5. Product Design

Prompt: A sleek matte black wireless earbud sitting on a polished marble surface, studio product photography, dramatic side lighting, 8K detail, advertising quality

Verdict: GPT Image 2 delivers more convincing studio lighting with sharper reflections on the marble surface. The earbud looks like it belongs in an Apple ad. Gemini's output is good but slightly softer in the lighting contrast.

6. Abstract Art

Prompt: An abstract painting blending elements of Kandinsky and Basquiat — geometric shapes, bold primary colors, energetic brushstrokes, chaotic but balanced composition

Verdict: Both successfully blend the Kandinsky/Basquiat styles. Gemini produces a larger, more visually complex canvas (4.5 MB vs 3.4 MB). This category is subjective — both outputs are gallery-worthy.

7. Architecture

Prompt: A futuristic eco-friendly skyscraper covered in vertical gardens and solar panels, set against a clear blue sky in a modern cityscape, architectural visualization render

Verdict: Gemini produces a more realistic architectural render with better urban context. GPT Image 2's building is more fantastical but less grounded in real-world architectural design.

8. Food Photography

Prompt: A perfectly plated Michelin-star dessert: chocolate sphere with gold leaf on a mirror glaze plate, raspberry coulis dots, micro herbs, dark moody food photography

Verdict: GPT Image 2 wins here — the plating composition is tighter, the coulis dots are more precise, and there's a textured crumb base that adds Michelin-level detail. Gemini's output is good but the composition is simpler.

File Size Comparison

Gemini's files average about 30% larger (3.3 MB vs 2.5 MB), which suggests more raw image data, though not necessarily better visual quality in every case.
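The size difference can be checked directly from the figures quoted in this article. The sketch below uses the two overall averages (2.5 MB and 3.3 MB) and the abstract art pair (3.4 MB and 4.5 MB); the helper name `percent_larger` is illustrative only.

```python
def percent_larger(larger_mb: float, smaller_mb: float) -> float:
    """How much bigger the first file size is than the second, in percent."""
    return (larger_mb / smaller_mb - 1.0) * 100.0


# Overall averages: Gemini 3.3 MB vs GPT Image 2 2.5 MB.
overall = percent_larger(3.3, 2.5)

# Abstract art category: Gemini 4.5 MB vs GPT Image 2 3.4 MB.
abstract_art = percent_larger(4.5, 3.4)

print(f"Overall average: {overall:.0f}% larger")       # 32% larger
print(f"Abstract art:    {abstract_art:.0f}% larger")  # 32% larger
```

Both figures land near 32%, in line with the roughly 30% difference stated above. File size depends on encoder settings as well as image content, so it is only a rough proxy for detail.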

Scorecard

When to Use Each

Choose GPT Image 2 when:

- Photorealistic human portraits are the primary use case

- Product photography needs precise studio lighting

- Food styling demands fine detail

- Latency is not a constraint (batch processing)

Choose Gemini 3 Pro when:

- Speed matters (real-time applications, iterative design)

- Architectural visualization or complex scenes with many elements

- Text rendering accuracy + layout composition is critical

- Budget-sensitive workloads (faster generation can mean lower cost per image where billing tracks compute time)
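The two lists above can be condensed into a simple routing rule. The sketch below is one possible encoding, not a definitive policy: the use-case labels, the `recommend_model` function, and the choice to let latency override category strengths are all assumptions made for illustration.

```python
# Use-case labels are hypothetical shorthand for the bullets above.
GPT_IMAGE_2_STRENGTHS = {"portrait", "product_photography", "food_styling"}
GEMINI_3_PRO_STRENGTHS = {"architecture", "complex_scene", "text_layout"}


def recommend_model(use_case: str, latency_sensitive: bool) -> str:
    """Pick a model from the guidance above; latency takes priority (an assumption)."""
    if latency_sensitive:
        # Real-time or iterative workflows favor the faster model.
        return "Gemini 3 Pro"
    if use_case in GPT_IMAGE_2_STRENGTHS:
        return "GPT Image 2"
    if use_case in GEMINI_3_PRO_STRENGTHS:
        return "Gemini 3 Pro"
    # No clear winner in this benchmark for other use cases.
    return "either"


print(recommend_model("portrait", latency_sensitive=False))      # GPT Image 2
print(recommend_model("portrait", latency_sensitive=True))       # Gemini 3 Pro
print(recommend_model("architecture", latency_sensitive=False))  # Gemini 3 Pro
print(recommend_model("abstract_art", latency_sensitive=False))  # either
```

A team that values portrait quality over response time could reverse the first check so that category strengths win over latency.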

Frequently Asked Questions

Is GPT Image 2 better than Gemini 3 Pro for image generation?

Neither is strictly better. GPT Image 2 produces superior photorealism and product photography, while Gemini 3 Pro is 4x faster and better at architectural visualization and complex scene composition. The best choice depends on your specific use case: speed-sensitive applications favor Gemini, quality-critical portraits and product shots favor GPT Image 2.

How fast is Gemini 3 Pro compared to GPT Image 2?

Gemini 3 Pro generates images in an average of 28 seconds compared to GPT Image 2's 112 seconds — a 4x speed advantage. The fastest Gemini generation was 23.3 seconds (illustration); the slowest GPT Image 2 was 131.2 seconds (complex scene).

Can GPT Image 2 and Gemini 3 Pro render text accurately?

Yes, both models render text accurately in our 2026 benchmark. The title, actor name, and tagline were all spelled correctly by both models in the movie poster test. This is a major improvement over 2024-era image generation models, which frequently misspelled words.

What resolution do GPT Image 2 and Gemini 3 Pro generate?

GPT Image 2 generates at 1536x1024 pixels in high quality mode. Gemini 3 Pro generates at approximately 2K resolution with a 3:2 aspect ratio. Both produce publication-quality images suitable for web, print, and marketing materials.
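For reference, the quoted GPT Image 2 output size reduces to the same 3:2 aspect ratio reported for Gemini. The helper below is a small illustrative calculation; only the 1536x1024 figure comes from this article, since Gemini's exact pixel dimensions are given only as approximately 2K.

```python
from math import gcd


def aspect_ratio(width: int, height: int) -> tuple:
    """Reduce pixel dimensions to the smallest whole-number ratio."""
    divisor = gcd(width, height)
    return (width // divisor, height // divisor)


width, height = 1536, 1024
ratio = aspect_ratio(width, height)
megapixels = width * height / 1_000_000

print(f"Aspect ratio: {ratio[0]}:{ratio[1]}")  # Aspect ratio: 3:2
print(f"Megapixels:   {megapixels:.2f}")       # Megapixels:   1.57
```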

About the Author

Aaron is an engineering leader, software architect, and founder with 18 years of experience building distributed systems and cloud infrastructure. He now focuses on LLM-powered platforms, agent orchestration, and production AI, and shares hands-on technical guides and framework comparisons at fp8.co.
